Advances in biomedical analytics and AI have revolutionized modern healthcare. Predictive systems in this field enable better medical and epidemiological research and help tailor proactive healthcare plans that can save lives. But the rise of automation in healthcare research and treatment comes with the challenge of maintaining patient privacy. As a team dedicated to responsible innovation, Inpher is pioneering state-of-the-art cryptographic techniques that secure and protect the privacy of patient data used in biomedical AI development, all without compromising performance or accuracy.

The Inpher team, led by Dr. Georgieva, Dr. Gama, and Dr. Carpov, won the top spot in the iDASH Secure Genome Analysis Competition last month for pioneering advances in healthcare and genomics privacy. Competing in a field of 115 registered teams, Inpher’s Secret Computing technology was specifically recognized for its Fully Homomorphic Encryption (FHE) and for Federated Learning with Secure Multi-party Computation (MPC).

About iDASH Privacy & Security Workshop

For the past seven years, the iDASH Workshop has served as a bridge between data privacy, AI/ML, and biomedical research. Every year, to spearhead innovation in the privacy-enhanced ML field, iDASH invites leading research labs and pioneering companies to solve complex ML problems in privacy-sensitive genomics use cases without leaking any confidential information. In fact, it is often touted as the Kaggle for privacy-enhanced Machine Learning.

There are three tracks for the 2020 competition:

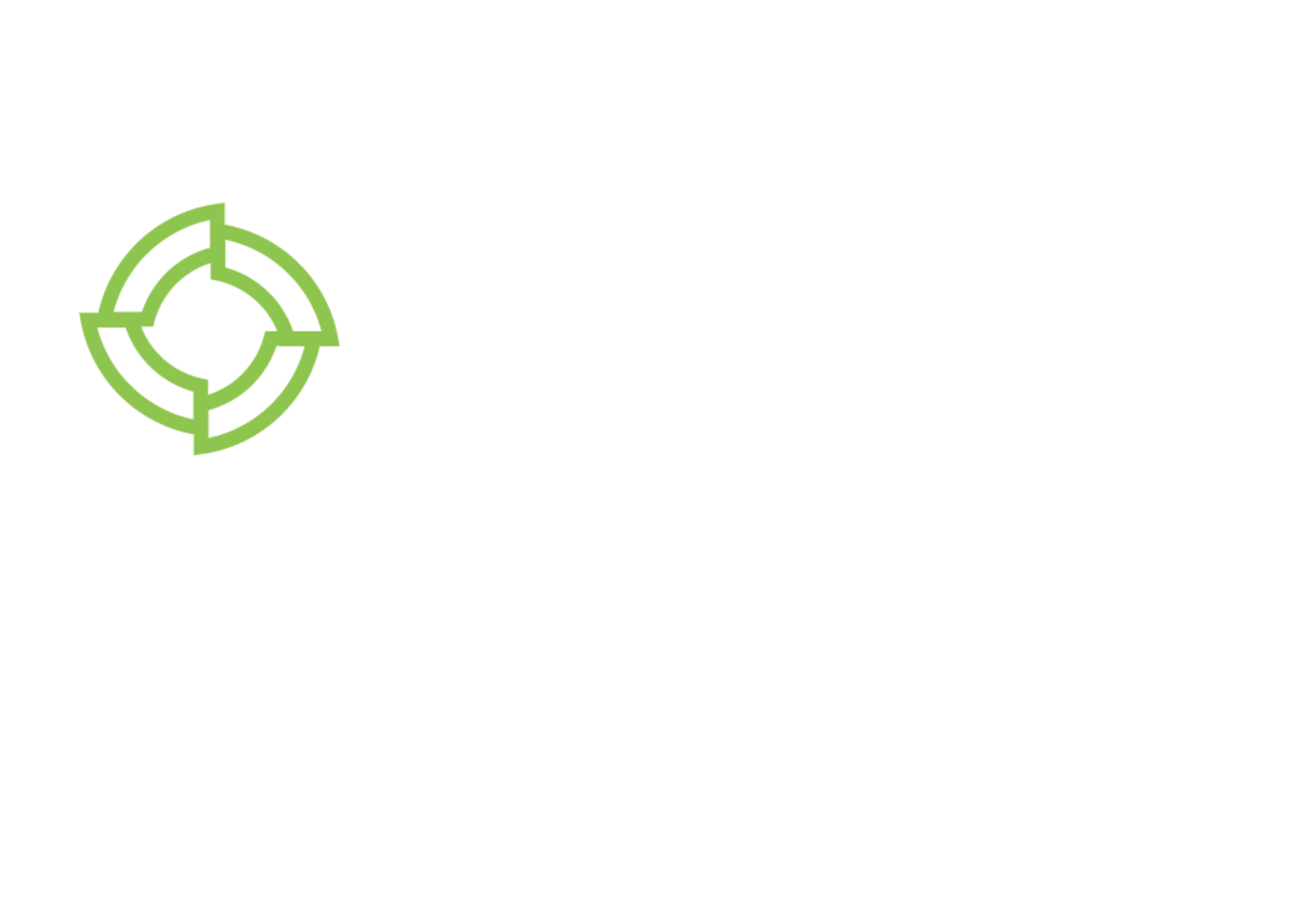

1. Secure Multi-Label Tumor Classification using Homomorphic Encryption

2. Privacy-Preserving Single-Cell Data Clustering in Intel SGX

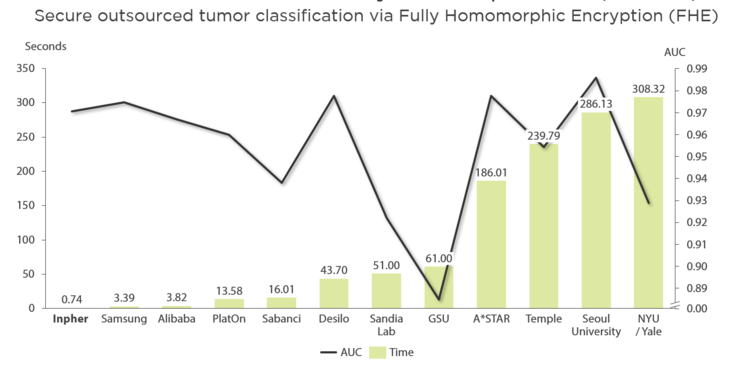

3. Differentially Private Federated Learning for Cancer Prediction

Inpher wins iDASH for two consecutive years

Inpher continues to push the boundaries of Secret Computing, winning first place this year in both tracks it entered: track 1 and track 3.

In track 1, iDASH explicitly recognized Inpher’s homomorphic encryption for model prediction, which achieved one of the highest accuracies (97.05%) with the lowest prediction time (0.75s). Inpher’s high score for analysis speed and accuracy was part of a four-way tie for first place along with Samsung, Seoul National University, and Desilo. Homomorphic encryption allows AI models (trained on plaintext data) to run inference on encrypted data without decrypting it, but it carries an enormous computational overhead. With Inpher’s advances in HE, healthcare organizations can finally develop their ML models faster using their privacy-sensitive data without ever exposing it.
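To make the idea of inference on encrypted data concrete, here is a minimal sketch. It is not Inpher’s competition entry and not FHE: it swaps in the much simpler Paillier scheme, which is only additively homomorphic, and uses it to score a linear model with integer weights on encrypted features. The key size, feature values, weights, and function names are all assumptions chosen for illustration, and the toy primes are far too small for real use.

```python
import math
import secrets

# Toy key material (assumption): two Mersenne primes, 2^31 - 1 and 2^61 - 1.
P, Q = 2**31 - 1, 2**61 - 1

def keygen(p=P, q=Q):
    """Return (public modulus n, private key) for Paillier with g = n + 1."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p-1, q-1)
    mu = pow(lam, -1, n)  # modular inverse; valid because g = n + 1
    return n, (lam, mu)

def encrypt(n, m):
    """Encrypt a (possibly negative) integer m under public modulus n."""
    n2 = n * n
    while True:
        r = secrets.randbelow(n - 1) + 1
        if math.gcd(r, n) == 1:
            break
    # (n + 1)^m mod n^2 simplifies to 1 + m*n
    return (1 + (m % n) * n) % n2 * pow(r, n, n2) % n2

def decrypt(n, priv, c):
    """Decrypt and map the result back to a signed integer."""
    lam, mu = priv
    n2 = n * n
    m = (pow(c, lam, n2) - 1) // n * mu % n
    return m - n if m > n // 2 else m

def encrypted_linear_score(n, enc_features, weights, bias):
    """Compute Enc(sum(w_i * x_i) + bias) without ever decrypting x_i.

    Multiplying ciphertexts adds plaintexts; raising a ciphertext to a
    plaintext power multiplies the underlying plaintext by that power.
    """
    n2 = n * n
    acc = encrypt(n, bias)
    for c, w in zip(enc_features, weights):
        acc = acc * pow(c, w % n, n2) % n2
    return acc

# Data owner encrypts its features; the model owner only sees ciphertexts.
n, priv = keygen()
features = [3, -2, 5]   # hypothetical integer-encoded patient features
weights = [2, 4, -1]    # hypothetical plaintext model weights
enc_features = [encrypt(n, x) for x in features]
enc_score = encrypted_linear_score(n, enc_features, weights, bias=10)
score = decrypt(n, priv, enc_score)  # only the key holder can read the score
```

The model owner works only with `enc_features` and never learns the inputs or the score. A fully homomorphic scheme additionally supports multiplying two encrypted values, which is what makes non-linear models possible and is also where most of the computational overhead comes from.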

In track 3, Inpher was recognized for delivering a Federated Learning-based Cancer Prediction Model that is resilient to model inversion attacks. This is especially critical as healthcare organizations and hospitals explore ways to collaborate and develop highly accurate ML models without sharing their data. While Federated Learning has shown tremendous potential, it is often susceptible to model inversion attacks, in which unsecured intermediate results and model parameters can leak sensitive information. Using Inpher’s MPC cryptographic technique, we secured these intermediate results while delivering 100% model accuracy with the lowest runtime (0.69s).
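One common way to protect intermediate results in federated learning is additive secret sharing, sketched below. This is a generic textbook construction, not a description of Inpher’s protocol: each hospital splits its model update into random shares, every party sums the shares it receives, and only the aggregate is ever reconstructed. The modulus, the integer-encoded updates, and the function names are assumptions made for the example.

```python
import secrets

MODULUS = 2**61 - 1  # assumed share modulus; must exceed any aggregate value

def share(value, n_parties):
    """Split an integer into n_parties random shares that sum to it mod MODULUS."""
    shares = [secrets.randbelow(MODULUS) for _ in range(n_parties - 1)]
    shares.append((value - sum(shares)) % MODULUS)
    return shares

def reconstruct(shares):
    """Recombine shares and map the result back to a signed integer."""
    total = sum(shares) % MODULUS
    return total - MODULUS if total > MODULUS // 2 else total

def secure_sum(updates):
    """Aggregate per-party update vectors without revealing any single one.

    updates[i] is party i's integer-encoded (fixed-point) model update.
    inbox[j][k] accumulates the shares party j receives for coordinate k,
    so each party only ever holds uniformly random-looking values.
    """
    n = len(updates)
    dim = len(updates[0])
    inbox = [[0] * dim for _ in range(n)]
    for vec in updates:
        for k, v in enumerate(vec):
            for j, s in enumerate(share(v, n)):
                inbox[j][k] = (inbox[j][k] + s) % MODULUS
    # Parties publish only their partial sums; combining them yields the total.
    return [reconstruct([inbox[j][k] for j in range(n)]) for k in range(dim)]

# Three hypothetical hospitals, each with a two-coordinate gradient update.
updates = [[12, -5], [3, 9], [-7, 1]]
aggregate = secure_sum(updates)
```

Because the coordinator sees only the aggregated update, an attacker who inspects it cannot attribute any contribution to a single hospital. This alone does not stop leakage from the final model itself, which is why the track also calls for differential privacy.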

Want to build secure, privacy-preserving ML models? Contact us.